Ceph: Difference between revisions

| Line 62: | Line 62: | ||

[[File:ceph_replication_size.png|800px]] | [[File:ceph_replication_size.png|800px]] | ||

{{Note|text=Figure from http://ceph.com/papers/weil-thesis.pdf (page 150).}} | |||

== Configure Ceph on clients == | == Configure Ceph on clients == | ||

Revision as of 12:01, 13 January 2015

Introduction

Ceph is a distributed object store and file system designed to provide excellent performance, reliability and scalability. - See more at: http://ceph.com/

Ceph architecture

Grid'5000 Deployment

| Sites | Size | Configuration | Rados | RBD | CephFS | RadosGW |

|---|---|---|---|---|---|---|

| Rennes | ~ 9TB | 16 OSDs on 4 nodes |

Configuration

Generate your key

In order to access to the object store you will need a Cephx key. See : https://api.grid5000.fr/sid/storage/ceph/ui/

Your key will also available from the frontends :

[client.jdoe] key = AQBwknVUwAPAIRAACddyuVTuP37M55s2aVtPrg==

Note : Replace jdoe by your login.

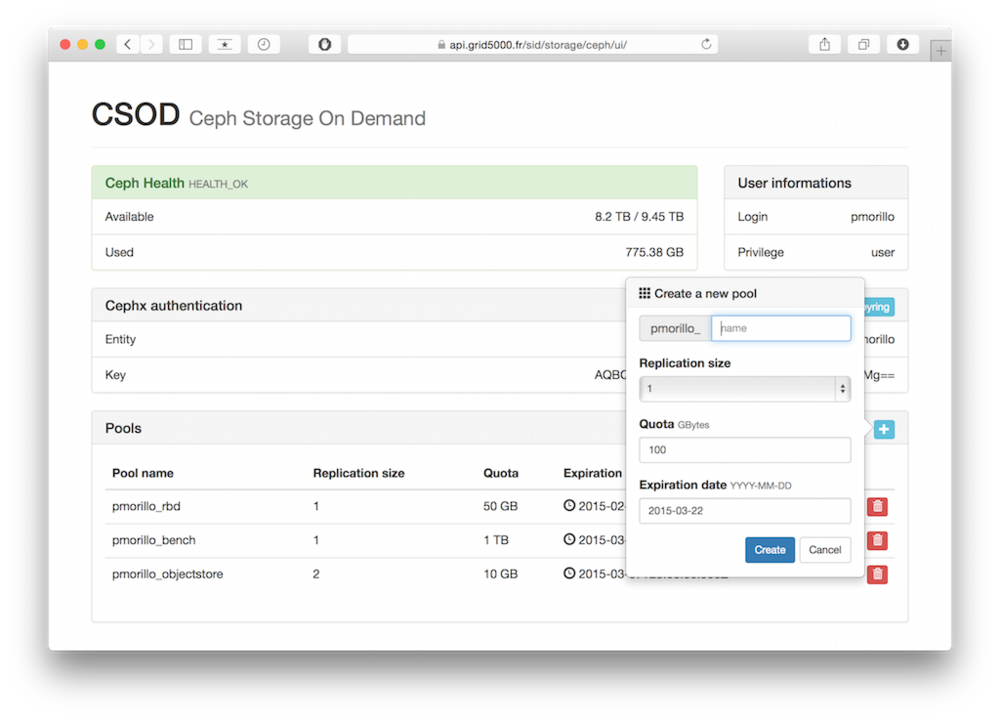

Create/Update/Delete Ceph pool

Requierement : Generate your key

Manage your Ceph pools from the Grid'5000 Ceph frontend : https://api.grid5000.fr/sid/storage/ceph/ui/

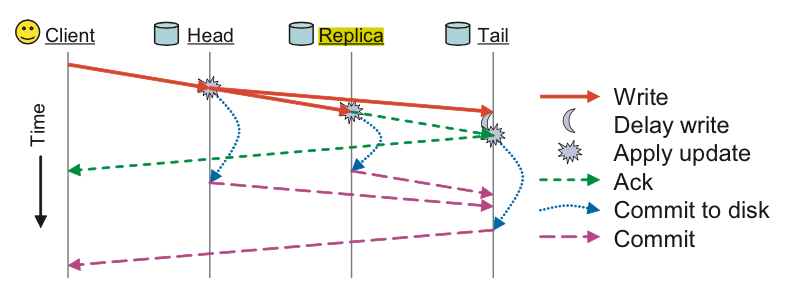

Replication size

- 1 : No replication (not secured, most efficient for write operations)

- n : One primary object + n-1 replicas (more security, less efficient for write operations)

| Note | |

|---|---|

Figure from http://ceph.com/papers/weil-thesis.pdf (page 150). | |

Configure Ceph on clients

On a deployed environment

Create a ceph configuration file /etc/ceph/ceph.conf :

[global] mon initial members = ceph0,ceph1,ceph2 mon host = 172.16.111.30,172.16.111.31,172.16.111.32

Create a ceph keyring file /etc/ceph/ceph.client.jdoe.keyring with your keyring :

[client.jdoe] key = AQBwknVUwAPAIRAACddyuVTuP37M55s2aVtPrg==

On the frontend or a node with production environment

| Note | |

|---|---|

The ceph version on frontend and production environment is old. Object Store access works, but not the support of RBD in Qemu/KVM. | |

Create a ceph configuration file ~/.ceph/config :

[global] mon initial members = ceph0,ceph1,ceph2 mon host = 172.16.111.30,172.16.111.31,172.16.111.32

Create a ceph keyring file ~/.ceph/ceph.client.jdoe.keyring with your keyring :

[client.jdoe] key = AQBwknVUwAPAIRAACddyuVTuP37M55s2aVtPrg==

Usage

Rados Object Store access

Requierement : Create a Ceph pool • Configure Ceph on client

From command line

| Note | |

|---|---|

Add | |

Put an object into a pool

List objects of a pool

Get object from a pool

Remove an object

Usage informations

pool name category KB objects clones degraded unfound rd rd KB wr wr KB pmorillo_objectstore - 1563027 2 0 0 0 0 0 628 2558455 total used 960300628 295991 total avail 7800655596 total space 9229804032

From your application (C/C++, Python, Java, Ruby, PHP...)

See : http://ceph.com/docs/master/rados/api/librados-intro/

RBD (Rados Block Device)

Requierement : Create a Ceph pool • Configure Ceph on client

Create a Rados Block Device

Create filesystem and mount RBD

id pool image snap device 1 jdoe_pool <rbd_name> - /dev/rbd1

Filesystem Size Used Avail Use% Mounted on /dev/sda3 15G 1.6G 13G 11% / ... /dev/sda5 525G 70M 498G 1% /tmp /dev/rbd1 93M 1.6M 85M 2% /mnt/rbd

Resize, snapshots, copy, etc...

See :

- http://ceph.com/docs/master/rbd/rados-rbd-cmds/

- http://ceph.com/docs/master/man/8/rbd/#examples

- http://ceph.com/docs/master/rbd/rbd-snapshot/

- rbd -h

QEMU/RBD

Requierement : Create a Ceph pool • Configure Ceph on client

Convert a qcow2 file into RBD

debian7