Revision as of 17:01, 7 November 2023

Note: This page is actively maintained by the Grid'5000 team. If you encounter problems, please report them (see the Support page). Additionally, as it is a wiki page, you are free to make minor corrections yourself if needed. If you would like to suggest a more fundamental change, please contact the Grid'5000 team.

Nipype

What is Nipype?


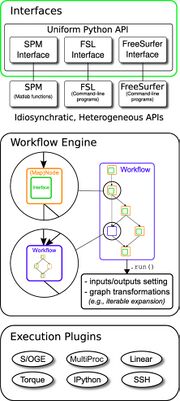

Nipype (Neuroimaging in Python Pipelines and Interfaces) is a flexible, lightweight and extensible neuroimaging data processing framework in Python. It is a community-developed initiative under the umbrella of Nipy. It addresses the heterogeneous collection of specialized applications in neuroimaging, such as SPM in MATLAB, FSL in shell and Nipy in Python, by offering a uniform interface that lets these packages interact within a single workflow. The source code, issues and pull requests can be found here. The fundamental parts of Nipype are Interfaces, the Workflow Engine and the Execution Plugins, as shown in the figure on the left.

Among the execution plugins, you can find

Installation

pip can be used to install the stable release of Nipype:

pip install nipype

It is recommended to install Python dependencies within a virtual environment. To do so, create and activate one (for example with Python's built-in venv module) before running the pip command.
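An optional sanity check can confirm this setup before moving on. The sketch below uses only the Python standard library; the function name check_environment is hypothetical. It only reports whether the interpreter runs inside a virtual environment, whether the nipype package metadata is found, and whether FSL's mcflirt executable is on PATH; it does not verify that FSL is fully configured.

```python
import importlib.metadata
import shutil
import sys


def check_environment() -> dict:
    """Report whether the prerequisites of the basic usage example seem present."""
    try:
        nipype_version = importlib.metadata.version("nipype")
    except importlib.metadata.PackageNotFoundError:
        nipype_version = None  # Nipype is not installed for this interpreter
    return {
        # Inside a venv, sys.prefix points at the venv while sys.base_prefix
        # still points at the base Python installation.
        "in_virtualenv": sys.prefix != sys.base_prefix,
        "nipype_version": nipype_version,
        # The basic usage example calls FSL's mcflirt, so it must be on PATH.
        "mcflirt_on_path": shutil.which("mcflirt") is not None,
    }


if __name__ == "__main__":
    for key, value in check_environment().items():
        print(f"{key}: {value}")
```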

Basic usage

Let's assume that you have installed Nipype's dependencies, including FSL, and downloaded the Rhyme judgment dataset (ds000003) available on OpenNeuro.

Here, we present a basic example of preprocessing with Nipype:

import nipype.interfaces.fsl as fsl
from nipype import Node, Workflow

preprocessing = Workflow(name='preprocessing')

# MCFLIRT (FMRIB's Linear Image Registration Tool) for intra-modal motion correction
mcflirt = Node(fsl.MCFLIRT(), name='mcflirt')
# temporal mean of the motion-corrected time series
mean = Node(fsl.MeanImage(), name='mean')

# connecting the Nodes also adds them to the Workflow
preprocessing.connect(mcflirt, 'out_file', mean, 'in_file')

# input BOLD run of the dataset (adapt this path to your own copy)
mcflirt.inputs.in_file = '/home/ychi/nipype/ds000003/sub-01/func/sub-01_task-rhymejudgment_bold.nii.gz'

# Workflow execution (by default, nodes run one after another on the local machine)
preprocessing.run()
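The input path above follows the BIDS naming scheme used by ds000003, so it can be built from the subject and task labels instead of being hard-coded. A minimal sketch; bold_path is a hypothetical helper, and it assumes a single run per task, as in the path above.

```python
from pathlib import Path


def bold_path(dataset_root: str, subject: str, task: str) -> Path:
    """Build the path of a subject's BOLD run following the BIDS naming scheme.

    Hypothetical helper: assumes one run per task (no 'run-' entity), as in
    'sub-01/func/sub-01_task-rhymejudgment_bold.nii.gz'.
    """
    sub = f"sub-{subject}"
    return Path(dataset_root) / sub / "func" / f"{sub}_task-{task}_bold.nii.gz"


if __name__ == "__main__":
    print(bold_path("/home/ychi/nipype/ds000003", "01", "rhymejudgment"))
```

The result can then be assigned with mcflirt.inputs.in_file = str(bold_path('/home/ychi/nipype/ds000003', '01', 'rhymejudgment')).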

Using Nipype Plugins

As shown in the figure above, Nipype's workflow engine supports a plugin architecture for workflow execution. The available plugins, from local ones such as Linear and MultiProc to cluster schedulers such as SGE, PBS, HTCondor, LSF, Slurm or OAR, allow local and distributed execution of workflows, as well as debugging.
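The plugin idea can be illustrated with a toy model that is not Nipype's code: the workflow is a dependency graph, and an executor is an interchangeable function that decides how ready nodes are run. The names run_linear and run_parallel are hypothetical; they only loosely mirror running nodes one at a time versus running independent nodes concurrently. The sketch requires Python 3.9+ for graphlib.

```python
from concurrent.futures import ThreadPoolExecutor
from graphlib import TopologicalSorter
from typing import Callable, Dict, Set

# Toy model (hypothetical, not Nipype's code): node -> names of the nodes it depends on,
# and node -> function receiving its dependencies' results in sorted-name order.
Graph = Dict[str, Set[str]]
Funcs = Dict[str, Callable[..., object]]


def _args(node: str, graph: Graph, results: dict) -> list:
    """Collect the results of a node's dependencies, ordered by dependency name."""
    return [results[dep] for dep in sorted(graph.get(node, ()))]


def run_linear(graph: Graph, funcs: Funcs) -> dict:
    """Serial executor: run one node at a time in dependency order."""
    results = {}
    for node in TopologicalSorter(graph).static_order():
        results[node] = funcs[node](*_args(node, graph, results))
    return results


def run_parallel(graph: Graph, funcs: Funcs, max_workers: int = 4) -> dict:
    """Concurrent executor: submit, wave by wave, every node whose dependencies are done."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()
    results = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        while sorter.is_active():
            # Nodes returned together by get_ready() do not depend on each other.
            futures = {node: pool.submit(funcs[node], *_args(node, graph, results))
                       for node in sorter.get_ready()}
            for node, future in futures.items():
                results[node] = future.result()
                sorter.done(node)
    return results


if __name__ == "__main__":
    graph = {"mean": {"mcflirt"}}
    funcs = {"mcflirt": lambda: "motion-corrected run", "mean": lambda img: f"mean of {img}"}
    print(run_linear(graph, funcs))
    print(run_parallel(graph, funcs))
```

Both executors produce the same results for the same graph; only the execution strategy changes, which is the property that makes plugins interchangeable.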

Every plugin is selected through the same call:

workflow.run(plugin=PLUGIN_NAME, plugin_args=ARGS_DICT)
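PLUGIN_NAME is case-sensitive: Nipype resolves it to a plugin class named after it (for example 'OAR' to OARPlugin), so 'oar' is not accepted. A small sketch that normalizes user input before calling run(); the helper names are hypothetical, and the list of names is an assumption covering only the plugins mentioned on this page (HTCondor's plugin is assumed to be named 'Condor').

```python
# Plugin names for the plugins discussed on this page (assumed list, not exhaustive).
KNOWN_PLUGINS = ("Linear", "MultiProc", "SGE", "PBS", "LSF", "Condor", "SLURM", "OAR")


def canonical_plugin_name(name: str) -> str:
    """Return the exact-case plugin name for a case-insensitive user input."""
    by_lower = {p.lower(): p for p in KNOWN_PLUGINS}
    try:
        return by_lower[name.lower()]
    except KeyError:
        raise ValueError(f"unknown plugin {name!r}; expected one of {KNOWN_PLUGINS}") from None


def run_kwargs(plugin: str, **plugin_args) -> dict:
    """Build the keyword arguments for workflow.run(plugin=..., plugin_args=...)."""
    return {"plugin": canonical_plugin_name(plugin), "plugin_args": plugin_args}


if __name__ == "__main__":
    print(run_kwargs("multiproc", n_procs=4))
```

With Nipype installed, this would be used as preprocessing.run(**run_kwargs('multiproc', n_procs=4)), where n_procs is the MultiProc plugin's number of parallel processes.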

Using Nipype's OAR plugin

For example, to execute the basic preprocessing example above with OAR resources, you need to call:

preprocessing.run(plugin='OAR')

Note: you can also provide traditional oarsub arguments by using the oarsub_args parameter:

preprocessing.run(plugin='OAR', plugin_args=dict(oarsub_args='-q default'))
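Rather than typing the option string by hand, it can be assembled from a few resource settings. A minimal sketch, assuming the usual oarsub options -q (queue) and -l (resource request with walltime); build_oarsub_args is a hypothetical helper, and the host resource, queue and walltime values are examples to adapt to your site.

```python
import shlex


def build_oarsub_args(queue: str = "default", hosts: int = 1,
                      walltime: str = "1:00:00", extra: str = "") -> str:
    """Assemble an oarsub option string for plugin_args=dict(oarsub_args=...).

    Assumes OAR's '-q <queue>' and '-l host=<n>,walltime=<h:mm:ss>' syntax.
    """
    parts = ["-q", queue, "-l", f"host={hosts},walltime={walltime}"]
    parts += shlex.split(extra)  # any further raw oarsub options, e.g. '-t deploy'
    return shlex.join(parts)     # quotes values only when the shell would need it


if __name__ == "__main__":
    print(build_oarsub_args(walltime="2:00:00"))
```

Usage: preprocessing.run(plugin='OAR', plugin_args=dict(oarsub_args=build_oarsub_args(walltime='2:00:00'))).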

Other parameters supported by the OAR plugin are:

* template: custom template file (e.g. 'hello-world.sh') for batch job submission
* max_jobname_len: maximum length of the job name. Default: 15.
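Both parameters can be passed together in plugin_args. The sketch below writes a template file and builds the dictionary. The template content is a placeholder, and the sketch assumes, as with Nipype's other batch plugins, that the template text is placed at the top of each generated job script, before the command that runs the node; write_template and oar_plugin_args are hypothetical helpers.

```python
import tempfile
from pathlib import Path

# Placeholder job-script prologue: adapt it to what your jobs need
# (loading modules, activating a virtual environment, ...).
TEMPLATE = """\
#!/bin/bash
# Example prologue: report which node runs the job.
echo "Running on $(hostname)"
"""


def write_template(directory: str, name: str = "hello-world.sh") -> Path:
    """Write the job template to disk and return its path."""
    path = Path(directory) / name
    path.write_text(TEMPLATE)
    return path


def oar_plugin_args(template_path: Path, max_jobname_len: int = 15) -> dict:
    """Combine the template and max_jobname_len parameters into one plugin_args dict."""
    return {"template": str(template_path), "max_jobname_len": max_jobname_len}


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        args = oar_plugin_args(write_template(tmp), max_jobname_len=30)
        print(args)
        # preprocessing.run(plugin='OAR', plugin_args=args)  # requires Nipype and OAR
```

The temporary directory only keeps the demo self-contained; in practice, write the template somewhere that persists while the jobs run, such as your home directory.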

Using Nipype's Slurm plugin

For example, to execute the basic preprocessing example above with Slurm resources, you need to call:

preprocessing.run(plugin='SLURM')

Other parameters supported by the Slurm plugin are:

* sbatch_args: command-line arguments to be passed to sbatch
* template: custom template file (e.g. 'hello-world.sh') for batch job submission
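As with OAR, the sbatch_args string can be assembled from a few resource settings. A minimal sketch using common sbatch options (--partition, --time, --mem, --cpus-per-task); build_sbatch_args is a hypothetical helper, and the values are examples to adapt to your cluster.

```python
import shlex


def build_sbatch_args(partition=None, time=None, mem=None, cpus_per_task=None,
                      extra: str = "") -> str:
    """Assemble an sbatch option string for plugin_args=dict(sbatch_args=...)."""
    options = {
        "--partition": partition,
        "--time": time,
        "--mem": mem,
        "--cpus-per-task": cpus_per_task,
    }
    # Keep only the options that were actually set, in a stable order.
    parts = [f"{flag}={value}" for flag, value in options.items() if value is not None]
    parts += shlex.split(extra)  # any further raw sbatch options
    return shlex.join(parts)


if __name__ == "__main__":
    print(build_sbatch_args(time="01:00:00", mem="4G"))
```

Usage: preprocessing.run(plugin='SLURM', plugin_args=dict(sbatch_args=build_sbatch_args(time='01:00:00', mem='4G'))).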

Pydra

Pydra is part of the second generation of the Nipype ecosystem and is meant to provide additional flexibility and reproducibility. Pydra rewrites the Nipype engine with mapping and joining as first-class operations. However, Pydra does not natively support OAR; examples and details of an OAR extension for Pydra can be found here.
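To make "mapping and joining" concrete, here is a plain-Python illustration of the idea; it is not Pydra's API, and split_outer and combine are hypothetical names. The map step runs the same computation over every combination of input values, and the join step regroups the per-combination results along one input.

```python
from collections import defaultdict
from itertools import product


def split_outer(**inputs):
    """Map step: one state per combination of input values (an outer product)."""
    names = list(inputs)
    return [dict(zip(names, values)) for values in product(*inputs.values())]


def combine(states, results, over):
    """Join step: group results by every input except `over`, collecting lists."""
    groups = defaultdict(list)
    for state, result in zip(states, results):
        key = tuple((k, v) for k, v in state.items() if k != over)
        groups[key].append(result)
    return dict(groups)


if __name__ == "__main__":
    states = split_outer(subject=["01", "02"], task=["rhymejudgment"])
    results = [f"sub-{s['subject']}_task-{s['task']}" for s in states]  # stand-in per-state work
    print(combine(states, results, over="subject"))
```

Joining over subject leaves one entry per task, holding the list of per-subject results, which is the kind of group-level input a later step would consume.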