Grenoble:Network


'''See also:''' [[Grenoble:Hardware|Hardware description for Grenoble]] | |||

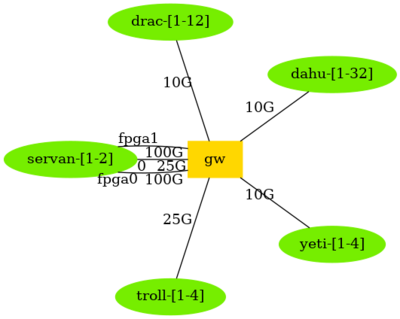

= Overview of Ethernet network topology = | |||

[[File:GrenobleNetwork.png|400px]] | |||

{{:Grenoble:GeneratedNetwork}} | |||

= HPC Networks = | |||

==== | == Infiniband 100G network == | ||

==== | An Infiniband 100G network interconnects nodes from the drac cluster, beside the Ethernet network. That Infiniband network is composed of a single switch. | ||

[[ | |||

The ''subnet manager'' of this Infiniband network is provided by the switch. | |||

* Switch: Mellanox SB7700 IB EDR / 100G | |||

* Host adapter: Mellanox MT27700 [ConnectX-4] dual-port | |||

* Each of the 12 drac nodes has two 100G connections to the switch | |||

== Omni-Path 100G network == | |||

The dahu, yeti and troll nodes are connected to a single Omni-Path switch (Intel Omni-Path 100Gbps), beside the Ethernet network. | |||

The fabric manager of this Omni-Path network is provided by one of the Grid'5000 service machines (digwatt). | |||

* Switch: Intel® Omni-Path Edge Switch 100 Series/H1048-OPF | |||

* Host adapter: Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe x16 | |||

* Each of the 44 dahu/yeti/troll nodes has one 100G connection to the switch | |||

(since 2021-03-01, this Omni-Path network interconnect is not any more shared between both the Grid'5000 Grenoble nodes and the nodes of the HPC Center of Université Grenoble Alpes (Gricad mésocentre), see the history of this page for information about the previous configuration). | |||

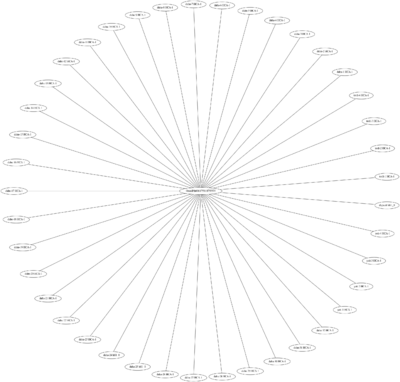

Topology, generated from <code>opareports -o topology</code>: | |||

[[File:topology-grenoble-omnipath-20211110.png|400px]] | |||

= IP Range = | |||

* Ethernet: '''172.16.16.0/20''' | |||

* HPC (Infiniband/Omni-Path): '''172.18.16.0/20''' (but Infiniband and Omni-path IPs are not reachable directly from one another) | |||

* Virtual: '''10.132.0.0/14''' | |||

Revision as of 15:14, 10 November 2021

See also: Hardware description for Grenoble

Overview of Ethernet network topology

Network device models

- gw: Dell S5296F-ON

More details (including address ranges) are available from the Grid5000:Network page.

HPC Networks

Infiniband 100G network

In addition to the Ethernet network, an Infiniband 100G network interconnects the nodes of the drac cluster. This Infiniband network consists of a single switch.

The subnet manager of this Infiniband network is provided by the switch.

- Switch: Mellanox SB7700 IB EDR / 100G

- Host adapter: Mellanox MT27700 [ConnectX-4] dual-port

- Each of the 12 drac nodes has two 100G connections to the switch

Omni-Path 100G network

In addition to the Ethernet network, the dahu, yeti and troll nodes are connected to a single Omni-Path switch (Intel Omni-Path, 100 Gbps).

The fabric manager of this Omni-Path network is provided by one of the Grid'5000 service machines (digwatt).

- Switch: Intel® Omni-Path Edge Switch 100 Series/H1048-OPF

- Host adapter: Intel® Omni-Path Host Fabric Interface Adapter 100 Series 1 Port PCIe x16

- Each of the 44 dahu/yeti/troll nodes has one 100G connection to the switch

Since 2021-03-01, this Omni-Path interconnect is no longer shared between the Grid'5000 Grenoble nodes and the nodes of the HPC center of Université Grenoble Alpes (Gricad mésocentre). See the history of this page for information about the previous configuration.
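As a rough illustration of the node and link counts listed for the two HPC fabrics, the following Python sketch computes the number of host links and the nominal aggregate host bandwidth of each fabric. The dictionary layout and function names are hypothetical, chosen only for this example. The 100 Gbit/s figure is the nominal link rate stated above, not a measured throughput.

```python
# Figures come from the lists above; all names here are illustrative only.
LINK_GBPS = 100  # nominal rate of one host link, in Gbit/s

FABRICS = {
    "infiniband": {"nodes": 12, "links_per_node": 2},  # drac
    "omnipath": {"nodes": 44, "links_per_node": 1},    # dahu, yeti, troll
}


def host_links(fabric):
    """Number of host-to-switch links in the given fabric."""
    entry = FABRICS[fabric]
    return entry["nodes"] * entry["links_per_node"]


def aggregate_gbps(fabric):
    """Nominal aggregate host bandwidth of the fabric, in Gbit/s."""
    return host_links(fabric) * LINK_GBPS


for name in FABRICS:
    print(f"{name}: {host_links(name)} host links, "
          f"{aggregate_gbps(name)} Gbit/s nominal")
```

Since each fabric is built around a single switch, the number of host links is also the number of switch ports occupied by compute nodes.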

Topology, generated from opareports -o topology (image: topology-grenoble-omnipath-20211110.png).

IP Range

- Ethernet: 172.16.16.0/20

- HPC (Infiniband/Omni-Path): 172.18.16.0/20 (Infiniband and Omni-Path addresses share this range but cannot reach each other directly)

- Virtual: 10.132.0.0/14
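
To check which of the ranges above a given address belongs to, a minimal sketch using Python's standard ipaddress module could look like the following. The RANGES table and the classify helper are hypothetical names introduced for this example. Only the three prefixes come from this page.

```python
import ipaddress

# Prefixes as listed above; the keys are illustrative labels only.
RANGES = {
    "ethernet": ipaddress.ip_network("172.16.16.0/20"),
    "hpc": ipaddress.ip_network("172.18.16.0/20"),
    "virtual": ipaddress.ip_network("10.132.0.0/14"),
}


def classify(address):
    """Return the label of the range containing address, or None."""
    ip = ipaddress.ip_address(address)
    for label, network in RANGES.items():
        if ip in network:
            return label
    return None


for label, network in RANGES.items():
    # network[0] and network[-1] are the first and last addresses of the prefix.
    print(f"{label}: {network[0]} - {network[-1]} "
          f"({network.num_addresses} addresses)")
```

For example, classify("172.16.20.5") returns "ethernet", while an address outside all three prefixes, such as "10.136.0.1", returns None. An address in the HPC range does not tell you whether it sits on the Infiniband or the Omni-Path fabric, since both share the same prefix.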