Network reconfiguration tutorial


Revision as of 16:20, 18 October 2021

| Note |
|---|
| This page is actively maintained by the Grid'5000 team. If you encounter problems, please report them (see the Support page). Additionally, as it is a wiki page, you are free to make minor corrections yourself if needed. If you would like to suggest a more fundamental change, please contact the Grid'5000 team. |

Introduction

This tutorial presents an example of Grid'5000 usage that takes advantage of the ability to reconfigure the network topology of the platform, using KaVLAN.

KaVLAN is a Grid'5000 tool that allows users to manage VLANs on the platform. This mechanism provides layer 2 network isolation for experiments.

This tutorial only uses 3 nodes, but could be adapted for use at scale, with hundreds of nodes.

Three kinds of KaVLAN VLANs are available in Grid'5000. You can find more information on the KaVLAN page. In this tutorial, we will only use global and local VLANs (no routed VLAN).

Before going further with the practical setup proposed in this page, it is strongly advised to read the KaVLAN page.

Topology setup

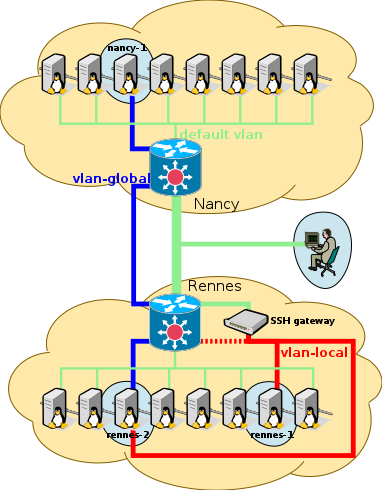

In this first part of the tutorial, we set up the topology shown in the picture.

Nodes nancy-1 and rennes-2 are placed in the same "global" (inter-site) VLAN, and rennes-2 is also placed in the same VLAN as rennes-1 (a "local" VLAN).

Reservations of the resources

First of all we will create jobs to reserve:

- 2 nodes providing at least 2 network interfaces each, on one site

- 1 local VLAN on the same site

- 1 global VLAN

- 1 node on another site

In the text below, we use the sites of Rennes and Nancy (but other sites could be used, see below):

- Rennes for the 2 nodes with several network interfaces, and the local VLAN.

- Nancy for the other node

(The global VLAN is discussed later.)

In practice, you are advised to open 2 terminal windows (2 separate shells) for the tutorial, one for Rennes and one for Nancy.

In Rennes (Rennes terminal)

In Rennes, we have to reserve 1 local VLAN and 2 nodes, for instance from the paravance cluster, because the nodes of that cluster have 2 network interfaces (to find other Grid'5000 clusters with this characteristic, see the Hardware page).

We also try to reserve the global VLAN in Rennes.

| Note |
|---|
| Please mind the fact that a global VLAN spreads all over Grid'5000, so we only need to reserve it on one site. |

Since we have to work on 2 different sites, we will use OAR advance reservations in order to have 2 jobs that start and end at the same dates. Let's define STARTDATE as the start date (e.g. "2016-01-21 14:00:00"; it does not matter if it is a few minutes in the past), and WALLTIME as the duration of the job (e.g. the duration of the tutorial).
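For instance, the two placeholders can be kept in shell variables. The sketch below assumes GNU date (as on the Debian-based frontends) and uses an arbitrary 2-hour walltime and a start 5 minutes from now; both values are only examples. Compute STARTDATE once and paste the same literal value into the other terminal, since both jobs must start at the same date.

```shell
# Example walltime only: adjust it to the length of your session.
WALLTIME="2:00:00"
# Start date 5 minutes from now, in the "YYYY-MM-DD HH:MM:SS" form used with oarsub -r.
# "date -d" with a relative date string is a GNU date extension.
STARTDATE=$(date -d '+5 minutes' '+%Y-%m-%d %H:%M:%S')
# Print ready-to-paste assignments for the other terminal.
echo "STARTDATE=\"$STARTDATE\" WALLTIME=$WALLTIME"
```

The variables can then replace the placeholders in the oarsub commands, as in `walltime=$WALLTIME -r "$STARTDATE"`.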

Once logged into Rennes' frontend in our first terminal, we execute:

rennes:frontend:

```shell
oarsub -t deploy -l {"type='kavlan-global'"}/vlan=1+{"type='kavlan-local'"}/vlan=1+{"cluster='paravance'"}/nodes=2,walltime=WALLTIME -r "STARTDATE"
```

Unfortunately, that command may fail (return KO):

- either because there are no more paravance nodes available for the duration of the tutorial, or no more local VLANs: this is unlikely to happen

- or because the global VLAN of Rennes is already taken: this is very likely to happen during a tutorial session with many students!

In either case, you will have to use a site other than Rennes, or try again later.

- If you face issue #1

Just replace Rennes by another site which has nodes with several interfaces (see the Hardware page to find such a site).

- If you face issue #2

Only reserve 1 local VLAN and 2 nodes of the paravance cluster, and reserve the global VLAN on the other site instead (to simplify this tutorial, we assume that the global VLAN of Nancy is available, but any other site with a free global VLAN would do).

So, if the previous command fails because Rennes' global VLAN is not available, only execute:

rennes:frontend:

```shell
oarsub -t deploy -l {"type='kavlan-local'"}/vlan=1+{"cluster='paravance'"}/nodes=2,walltime=WALLTIME -r "STARTDATE"
```

(and do not forget to reserve a global VLAN on the other site, as described in the next section about the reservation in Nancy).

Either way, a successful oarsub command should have returned a JOBID.

Let's call our 2 nodes in Rennes rennes-1 and rennes-2 (e.g. you might have rennes-1 = paravance-23).

Now, let's retrieve the ID of our VLANs (local + global, or just the local one if the global VLAN is reserved elsewhere):

You can tell which VLAN ID is global and which is local using the following table:

| KaVLAN name in OAR | type | first id | last id |
|---|---|---|---|
| kavlan-local | local | 1 | 3 |
| kavlan | routed | 4 | 9 |
| kavlan-global | global | 10 | 21 |

(see KaVLAN for more details)
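The ID ranges in this table can be captured in a small shell function, which may help when scripting the tutorial. This is a minimal sketch: the name vlan_type is ours, and it hard-codes the ranges listed above, which should be checked against the KaVLAN page.

```shell
# Map a KaVLAN VLAN ID to its type, following the ID ranges in the table.
# Prints "local", "routed" or "global"; prints "unknown" and returns 1 otherwise.
vlan_type() {
    id=$1
    case $id in
        ''|*[!0-9]*) echo "unknown"; return 1 ;;  # not a non-negative integer
    esac
    if [ "$id" -ge 1 ] && [ "$id" -le 3 ]; then
        echo "local"
    elif [ "$id" -ge 4 ] && [ "$id" -le 9 ]; then
        echo "routed"
    elif [ "$id" -ge 10 ] && [ "$id" -le 21 ]; then
        echo "global"
    else
        echo "unknown"
        return 1
    fi
}
```

For example, `vlan_type 16` prints `global`, and `vlan_type 2` prints `local`.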

In Nancy (Nancy terminal)

If we managed to reserve the global VLAN in Rennes, we just need to reserve a single node in Nancy. In our second terminal, we log in to Nancy's frontend, and execute:

If not, we also reserve Nancy's global VLAN. We execute:

nancy:frontend:

```shell
oarsub -t deploy -l {"type='kavlan-global'"}/vlan=1+nodes=1,walltime=WALLTIME -r "STARTDATE"
```

Again, a successful oarsub command should have returned a JOBID (which of course may not be the same as the job ID in Rennes).

If oarsub fails (returns "KO"), you should probably try yet another site, other than Nancy, where a global VLAN is available.

Let's call our node in Nancy nancy-1 (e.g. nancy-1 = graphite-3).

You can now look at which VLANs you get, as above.

Deployment of our operating system for the experiment

In Rennes (Rennes terminal)

Back on the Rennes terminal, we now enter our job in order to benefit from the OAR environment variable $OAR_NODEFILE.

(JOBID being, of course, the ID of the Rennes job.)

Then we launch the deployment of the Debian minimal environment to the nodes:

| Note |
|---|
| We choose to not use the --vlan |

To be able to SSH from one node to the other, we push our SSH private key to the nodes.

| Note |
|---|
| When access to a node is broken, for instance due to an error in the network setup, the Kaconsole tool becomes very handy: it connects you to the serial console of a node, much the same way you would switch to a virtual console on a GNU/Linux workstation (CTRL+ALT+F1), so you can fix the network configuration. The credentials for logging in on the console of our deployed environment are "root":"grid5000". |

In Nancy (Nancy terminal)

We run from Nancy's frontend the same commands as in Rennes, but use the JOBID of Nancy of course.

Installation of useful extra packages for networking

In this tutorial we are going to need some extra packages which are not installed in the debian11-min environment, such as tcpdump. Since we are going to modify the network configuration in a way that makes the Internet unreachable from the nodes, we must install them beforehand.

Besides, you may wish to play with (or debug) your network configuration using other tools such as ethtool, dig, or traceroute. If you want to use them later, you need to install them now too.

In Nancy (Nancy terminal)

nancy:frontend:

```shell
ssh root@nancy-1 "apt-get update; apt-get install --yes at tcpdump dnsutils traceroute ethtool ipcalc"
```

In Rennes (Rennes terminal)

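In Rennes, since we reserved several nodes (hey, 2 is more than 1! :-) ), we will use TakTuk to speed up the process:

rennes:frontend:

```shell
# uniq: the OAR node file may list each node several times (once per core),
# which would start several concurrent apt-get runs on the same node
taktuk -s -l root -f <(uniq $OAR_FILE_NODES) broadcast exec [ "apt-get update; apt-get --yes install at tcpdump dnsutils traceroute ethtool ipcalc" ]
```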

In addition, we will need OpenVSwitch on rennes-2 in the second part of the tutorial, so we install it too:

Setup of the network topology configuration

Thanks to KaVLAN, we will have layer 2 network isolation for our nodes. This means that whatever IP configuration we set in our VLAN, there is no risk of breaking the network configuration in the default VLAN or of disturbing other users (for instance by misusing reserved IP addresses). We can even set up network services or protocols based on IP broadcast (e.g. DHCP) with no risk of polluting the default VLAN (or of conflicting with the platform's DHCP service in the default VLAN).

However, we must keep in mind that:

- We must be very careful about which VLAN the node is in at any time. Indeed, a mistake could quickly lead to changes to a node's network configuration while the node is actually still in the default VLAN, which could impact other users or experiments.

- While it can be interesting to manage the IP addressing of the nodes in our VLAN ourselves, Grid'5000 provides predefined IP addresses for the nodes within any VLAN, which can be very handy (see the KaVLAN page), especially because they are served by a DHCP service within each VLAN. Both options are possible, and one has to weigh the pros and cons depending on the actual need.

- Setting up our own IP addressing mechanism can be seen as an "expert mode", which the kavlan command supports as well, by allowing you to disable the DHCP service in a VLAN if required. Please be really careful about what you do in that expert mode.

- If you do not use the IP addresses provided by Grid'5000, routing to global VLANs and routed VLANs will not work, and neither will the SSH gateways to local VLANs. However, if some or all of those features are not useful for your experiment, this is not a problem.

That said, for this tutorial and up to the OpenVSwitch section, we will use the predefined IP addresses provided by Grid'5000. This will be very handy thanks to the DHCP services provided in the VLANs.

Furthermore, for ease of reading, let:

- G_ID be the ID of the global VLAN we reserved

- L_ID be the ID of the local VLAN we reserved

In Nancy (Nancy terminal)

nancy-1 will be put in the global VLAN. Once in that VLAN, it will be able to ask the DHCP server of the global VLAN for an IP address, and the server will answer with the IP corresponding to nancy-1-kavlan-G_ID. See the table on the KaVLAN page to find out the corresponding IP address, or run:

The mechanism we have to trigger is the following:

- Change the VLAN of the node

- Restart the network service

Since our node will no longer be reachable at its old IP address (the one in the default VLAN) once it is in the global VLAN, we cannot simply run action #2 after action #1. Instead, we use a trick: before action #1, we schedule action #2 to run asynchronously. This can be done with the at command, for instance. Let's do it in one command line:

nancy:frontend:

```shell
ssh root@nancy-1 "echo 'service networking restart' | at now + 1 minute" && kavlan -s -i G_ID -m nancy-1.nancy.grid5000.fr --verbose
```

In this way, the restart of the networking service is scheduled before the VLAN change, and actually happens one minute later.

Another option would be to use Kaconsole to access the node via its serial console and run action #2, but that does not scale to many nodes, so it should be kept as a last resort.

| Note |
|---|
| Feel free to make different tests, with |

In Rennes (Rennes terminal)

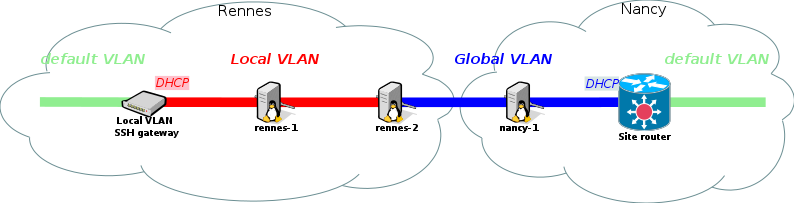

In Rennes, we want:

- to put the default network interfaces of rennes-1 and rennes-2 (eth0/eno1 on paravance) in the local VLAN, and have their IP addresses changed accordingly;

- to put the secondary network interface of rennes-2 (eth1/eno2 on paravance) in the global VLAN, and have its IP address changed accordingly.

That way, rennes-2 will be in-between the local and the global VLANs, which is the heart of our setup.

For the first action, we do the same as for Nancy's node, but with the local VLAN instead of the global VLAN, and using taktuk to benefit from a parallel execution on the 2 nodes:

Notice that rennes-1 and rennes-2 are still accessible via SSH through the VLAN's SSH gateway, named "kavlan-L_ID":

We now have to put rennes-2's secondary network interface in the global VLAN. That secondary interface can be referenced with the following hostname: rennes-2-eth1.

| Note |
|---|
| According to the information of the Grid'5000 API, for paravance nodes, eth1/eno2 is indeed cabled to the switch: see eno2 in https://api.grid5000.fr/sid/sites/rennes/clusters/paravance/nodes/paravance-1.json?pretty. |

So, to do that, run the kavlan command:

Since the node is no longer reachable from the default VLAN, we have to go through the local VLAN to restart the network service. So let's hop through the gateway to reach rennes-2, like this:

And finally, configure the interface and schedule a network restart in one minute:

Then exit the SSH session before the networking service restarts; otherwise, the session will be lost and the terminal will freeze.

| Note |
|---|
| As eno2 is not configured by default in /etc/network/interfaces, we have to configure it before restarting the network; otherwise, this interface won't ask for a DHCP lease. |

We are now done with the VLAN setup.

The following table summarizes the hostnames of the machines and the way to access them, depending on the VLAN they are in:

| | nancy-1 | rennes-2 | rennes-1 | access from default VLAN |
|---|---|---|---|---|
| default VLAN | nancy-1 | rennes-2 | rennes-1 | |
| local VLAN | | rennes-2-kavlan-L_ID | rennes-1-kavlan-L_ID | via SSH gateway: kavlan-L_ID in the local site |
| global VLAN | nancy-1-kavlan-G_ID | rennes-2-eth1-kavlan-G_ID | | routed from any site |
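The names in this table follow a regular pattern, `<node>[-<interface>]-kavlan-<VLAN ID>`, with the usual `.<site>.grid5000.fr` suffix for the fully qualified form (as in the traceroute output further down). A small helper, assuming that pattern holds for your nodes (check the KaVLAN page); the function name kavlan_hostname is hypothetical:

```shell
# Build the DNS name of a node (or of one of its secondary interfaces) inside a
# KaVLAN VLAN, following the pattern used in this tutorial:
#   <node>[-<iface>]-kavlan-<vlan_id>[.<site>.grid5000.fr]
# Usage: kavlan_hostname NODE VLAN_ID [IFACE] [SITE]
kavlan_hostname() {
    node=$1 vlan_id=$2 iface=${3:-} site=${4:-}
    name=$node
    if [ -n "$iface" ]; then
        name="$name-$iface"           # secondary interface, e.g. eth1
    fi
    name="$name-kavlan-$vlan_id"
    if [ -n "$site" ]; then
        name="$name.$site.grid5000.fr"  # fully qualified form
    fi
    printf '%s\n' "$name"
}
```

For instance, with the VLAN ID stored in a shell variable, `kavlan_hostname rennes-2 "$G_ID" eth1` gives the name of rennes-2's secondary interface in the global VLAN.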

First tests of the topology

We can now run some basic tests:

- Try to ssh to nancy-1 in the global VLAN from the frontend of any Grid'5000 site, then to rennes-2, and finally to rennes-1

- Try to ssh to the SSH gateway of the local VLAN, then to rennes-1, then to rennes-2, and finally to nancy-1

- Try to ping rennes-2's IP in the global VLAN from nancy-1

- Try to ping rennes-2's IP in the local VLAN from nancy-1

- Try to ping rennes-1's IP in the local VLAN from nancy-1

- Try to ping nancy-1's IP in the global VLAN from rennes-1

For that purpose, you will have to find out the hostnames and IP addresses of the machines in the VLANs! See the KaVLAN page and the table above.

For instance, rennes-2 should be reachable in the global VLAN with the hostname rennes-2-eth1-kavlan-G_ID.

The last 3 pings between nancy-1 and Rennes' local VLAN (either way) should fail at this point.

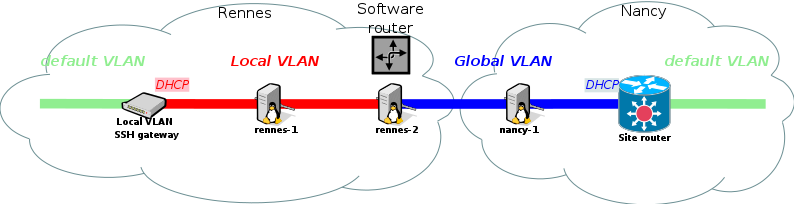

Setup of the IP routing between the 2 VLANs

rennes-1 and nancy-1 are in separate VLANs, so no direct IP communication is possible from rennes-1 to nancy-1 (or the other way around).

To enable it, we will activate the IP routing feature of Linux on rennes-2. This only consists of setting a sysctl, as follows:
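On Linux, the sysctl in question is net.ipv4.ip_forward (typically set with `sysctl -w net.ipv4.ip_forward=1`), and its current value is also exposed in /proc/sys/net/ipv4/ip_forward. A small check you can run on rennes-2 before and after changing it; the function name is ours, and the optional file argument only exists to make it easy to test:

```shell
# Report whether IPv4 forwarding is enabled by reading the kernel setting
# that "sysctl net.ipv4.ip_forward" also shows (1 = enabled, 0 = disabled).
# Usage: ip_forward_state [FILE]   (FILE defaults to the /proc entry)
ip_forward_state() {
    file=${1:-/proc/sys/net/ipv4/ip_forward}
    value=$(cat "$file") || return 1
    case $value in
        1) echo "enabled" ;;
        0) echo "disabled" ;;
        *) echo "unexpected value: $value" >&2; return 1 ;;
    esac
}
```

On rennes-2, `ip_forward_state` should print `enabled` once routing is active, and `disabled` again after IP routing is deactivated in the OpenVSwitch part.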

We also need to add a route on both rennes-1 and nancy-1, so that they know how to reach each other.

To find out the IP network of the local VLAN and of the global VLAN, we can either look at the tables in the KaVLAN page, or examine the IP configuration of the interfaces of rennes-1, with ip addr show and ip route show.

Let us denote:

- G_NETWORK/G_NETMASK the network of the global VLAN

- L_NETWORK/L_NETMASK the network of the local VLAN

- G_IP_rennes-2 the IP address of rennes-2 in the global VLAN

- L_IP_rennes-2 the IP address of rennes-2 in the local VLAN
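The network part can be derived from the address and prefix length that `ip addr show` reports for an interface (ipcalc, installed earlier, performs the same computation). A pure-shell sketch of that computation; the function name is ours, and the /20 prefix in the example below is only an illustration:

```shell
# Compute the network address of an IPv4 address given its prefix length,
# e.g. from an "inet 192.168.200.68/20" line printed by "ip addr show".
# Usage: ipv4_network ADDRESS PREFIX_LEN   -> prints NETWORK/PREFIX_LEN
ipv4_network() {
    addr=$1 prefix=$2
    # Split the dotted quad into its four octets.
    o1=${addr%%.*}; rest=${addr#*.}
    o2=${rest%%.*}; rest=${rest#*.}
    o3=${rest%%.*}; o4=${rest#*.}
    ip=$(( (o1 << 24) | (o2 << 16) | (o3 << 8) | o4 ))
    # Netmask with the PREFIX_LEN leftmost bits set (a /0 prefix gives mask 0).
    if [ "$prefix" -eq 0 ]; then
        mask=0
    else
        mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    fi
    net=$(( ip & mask ))
    printf '%d.%d.%d.%d/%d\n' \
        $(( (net >> 24) & 255 )) $(( (net >> 16) & 255 )) \
        $(( (net >> 8) & 255 )) $(( net & 255 )) "$prefix"
}
```

For example, `ipv4_network 192.168.200.68 20` prints `192.168.192.0/20`.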

We can add on rennes-1 a route to the global VLAN as follows:

And on nancy-1 a route to the local VLAN as follows:

Routing should now be operational!
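Routes of this kind use the iproute2 form `ip route add NETWORK/PREFIX via GATEWAY`, and are removed by replacing add with del. A small wrapper that keeps the two routes symmetric; its name and the RUN=echo dry-run convention (print the command instead of running it) are our own:

```shell
# Add or delete the route that sends traffic for a remote VLAN network
# through rennes-2. With RUN=echo, the command is only printed.
# Usage: vlan_route add|del NETWORK/PREFIX GATEWAY
vlan_route() {
    case $1 in
        add|del) ;;
        *) echo "usage: vlan_route add|del NETWORK/PREFIX GATEWAY" >&2; return 2 ;;
    esac
    ${RUN:-} ip route "$1" "$2" via "$3"
}

# On rennes-1 (local VLAN): reach the global VLAN via rennes-2's local address.
#   vlan_route add G_NETWORK/G_NETMASK L_IP_rennes-2
# On nancy-1 (global VLAN): reach the local VLAN via rennes-2's global address.
#   vlan_route add L_NETWORK/L_NETMASK G_IP_rennes-2
```

Running `RUN=echo vlan_route add ...` first is a cheap way to check the placeholders were substituted correctly before touching the routing table.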

New tests with IP routing enabled

First, you can replay the previous tests and check that they all succeed now:

- Try to ssh to nancy-1 in the global VLAN from the frontend of any Grid'5000 site, then to rennes-2, and finally to rennes-1

- Try to ssh to the SSH gateway of the local VLAN, then to rennes-1, then to rennes-2, and finally to nancy-1

- Try to ping rennes-2's IP in the global VLAN from nancy-1

- Try to ping rennes-2's IP in the local VLAN from nancy-1

- Try to ping rennes-1's IP in the local VLAN from nancy-1

- Try to ping nancy-1's IP in the global VLAN from rennes-1

And to go further, we can observe packets with tcpdump.

For instance let's glance at the packets on our router.

We run the following commands on rennes-1 and rennes-2:

(tcpdump is a network traffic sniffer, here filtering ICMP traffic on eno1)

On the first terminal, you should see that the ICMP requests and replies are forwarded by rennes-2:

```
listening on eno1, link-type EN10MB (Ethernet), capture size 262144 bytes
17:08:55.145241 IP 10.27.200.183 > 192.168.200.68: ICMP echo request, id 3498, seq 1, length 64
17:08:55.145356 IP 192.168.200.68 > 10.27.200.183: ICMP echo reply, id 3498, seq 1, length 64
17:08:56.146970 IP 10.27.200.183 > 192.168.200.68: ICMP echo request, id 3498, seq 2, length 64
17:08:56.147075 IP 192.168.200.68 > 10.27.200.183: ICMP echo reply, id 3498, seq 2, length 64
17:08:57.148675 IP 10.27.200.183 > 192.168.200.68: ICMP echo request, id 3498, seq 3, length 64
17:08:57.148782 IP 192.168.200.68 > 10.27.200.183: ICMP echo reply, id 3498, seq 3, length 64
```

If IP forwarding were disabled on rennes-2 (the sysctl command above, with 0 instead of 1), tcpdump would only see ping requests and no replies, because rennes-2 would not forward anything to nancy-1, and ping would fail.
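To compare the two cases quickly, the echo requests and replies in a capture can be counted. A sketch, assuming tcpdump's text output with one packet per line, as shown above; the function name and the capture file name are ours:

```shell
# Count ICMP echo requests and replies in tcpdump text output read on stdin.
# Balanced counts mean traffic flows both ways; requests without replies
# match the "forwarding disabled" case.
count_icmp_echo() {
    awk '
        /ICMP echo request/ { req++ }
        /ICMP echo reply/   { rep++ }
        END { printf "requests=%d replies=%d\n", req, rep }
    '
}
```

For example, capture a fixed number of packets with `tcpdump -n -i eno1 -c 20 icmp > capture.txt` while a ping is running, then run `count_icmp_echo < capture.txt`.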

We also check the packet route with traceroute from rennes-1:

We should see two hops: the intermediary router and the target:

```
traceroute to grisou-9-kavlan-16.nancy (10.27.209.9), 30 hops max, 60 byte packets
1 paranoia-8-eth0-kavlan-1.rennes.grid5000.fr (192.168.194.8) 0.161 ms 0.167 ms 0.148 ms
2 grisou-9-eth0-kavlan-16.nancy.grid5000.fr (10.27.209.9) 24.852 ms 24.834 ms 24.816 ms
```

The first hop is rennes-2, and the second one is nancy-1.
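When comparing setups (2 hops here, a single hop once the VLANs are bridged later on), counting the hop lines can save some squinting. A sketch, assuming traceroute's usual text output where each hop line starts with its hop number; the function name is ours:

```shell
# Count the hops listed in traceroute output read on stdin: hop lines start
# with the hop number, while the header line ("traceroute to ...") does not.
count_hops() {
    awk '$1 ~ /^[0-9]+$/ { hops++ } END { print hops + 0 }'
}
```

For example, `traceroute nancy-1-kavlan-G_ID | count_hops` (with the placeholders replaced) should print 2 at this stage.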

With tcpdump, we can also check that the nodes are completely isolated from the production VLAN (and from any other VLAN):

On rennes-1 (over 12 seconds):

```
listening on eno1, link-type EN10MB (Ethernet), capture size 262144 bytes
17:26:28.053495 STP 802.1w, Rapid STP, Flags [Learn, Forward], bridge-id 82bd.8c:60:4f:47:6d:7c.8110, length 43
17:26:30.053550 STP 802.1w, Rapid STP, Flags [Learn, Forward], bridge-id 82bd.8c:60:4f:47:6d:7c.8110, length 43
17:26:32.053496 STP 802.1w, Rapid STP, Flags [Learn, Forward], bridge-id 82bd.8c:60:4f:47:6d:7c.8110, length 43
17:26:34.053499 STP 802.1w, Rapid STP, Flags [Learn, Forward], bridge-id 82bd.8c:60:4f:47:6d:7c.8110, length 43
17:26:36.053565 STP 802.1w, Rapid STP, Flags [Learn, Forward], bridge-id 82bd.8c:60:4f:47:6d:7c.8110, length 43
17:26:38.053505 STP 802.1w, Rapid STP, Flags [Learn, Forward], bridge-id 82bd.8c:60:4f:47:6d:7c.8110, length 43
17:26:40.053525 STP 802.1w, Rapid STP, Flags [Learn, Forward], bridge-id 82bd.8c:60:4f:47:6d:7c.8110, length 43
```

The only frames received are Ethernet-level management frames sent by the switches (STP frames here, but we could also see LLDP frames, or others).

In comparison, this is what can be captured on a node in the production VLAN in only one second:

```
14:27:43.920934 IP paravance-60.rennes.grid5000.fr.38784 > dns.rennes.grid5000.fr.domain: 65121+ PTR? 5.98.16.172.in-addr.arpa. (42)
14:27:43.921384 IP dns.rennes.grid5000.fr.domain > paravance-60.rennes.grid5000.fr.38784: 65121* 1/1/0 PTR parapide-5.rennes.grid5000.fr. (103)
14:27:43.921510 IP paravance-60.rennes.grid5000.fr.49250 > dns.rennes.grid5000.fr.domain: 48890+ PTR? 111.111.16.172.in-addr.arpa. (45)
14:27:43.921816 IP dns.rennes.grid5000.fr.domain > paravance-60.rennes.grid5000.fr.49250: 48890* 1/1/0 PTR kadeploy.rennes.grid5000.fr. (104)
14:27:44.017208 ARP, Request who-has parapide-5.rennes.grid5000.fr tell dns.rennes.grid5000.fr, length 46
14:27:44.201278 IP6 fe80::214:4fff:feca:9470 > ff02::16: HBH ICMP6, multicast listener report v2, 1 group record(s), length 28
14:27:44.201416 IP paravance-60.rennes.grid5000.fr.34416 > dns.rennes.grid5000.fr.domain: 7912+ PTR? 6.1.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.0.2.0.f.f.ip6.arpa. (90)
14:27:44.284641 ARP, Request who-has parapide-9.rennes.grid5000.fr tell kadeploy.rennes.grid5000.fr, length 46
14:27:44.307171 ARP, Request who-has parapide-5.rennes.grid5000.fr tell metroflux.rennes.grid5000.fr, length 46
14:27:44.398978 IP dns.rennes.grid5000.fr.domain > paravance-60.rennes.grid5000.fr.34416: 7912 NXDomain 0/1/0 (160)
```

Here we see ARP requests, DNS messages, multicast reports…

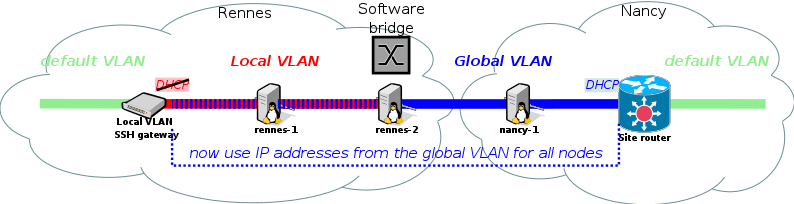

Bridging VLANs with OpenVSwitch

Setup

Our goal is now to allow nancy-1 and rennes-1 to communicate directly at the Ethernet layer, with no IP routing in between. In this configuration, only one IP subnet (range) must be used, so let's choose the global VLAN one and forget about the local VLAN one.

First, we need to undo what we configured for IP routing:

- remove the extra IP route to L_NETWORK on nancy-1

- deactivate the IP routing functionality on rennes-2

- stop the DHCP service in the local VLAN (so that it does not conflict with the global VLAN one)

- unconfigure both network interfaces of rennes-2, and set up OpenVSwitch within a Kaconsole session

- restart the networking service of rennes-1!

Let's detail each action:

- action #1

Just replace add by del in the ip route command.

- action #2

In the sysctl command, set ip_forward to 0 instead of 1.

- action #3

- action #4

Run Kaconsole

Log in with root / grid5000.

Unconfigure the IP addresses of the network interfaces:

Create the bridge:

Add eno1 and eno2 to the bridge:

You can list all the ports in the bridge with:

- and finally action #5

From Kaconsole on rennes-1:

- We are done

rennes-1 should now have an IP address of the global VLAN, while still being in the local VLAN, with the associated DNS hostname rennes-1-kavlan-G_ID!
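For reference, action #4 can be written down as a short sequence of standard `ip` and `ovs-vsctl` commands. This is a sketch of one possible sequence, assuming the interface names eno1/eno2 and the bridge name OVSbr used in the flow-control section; the function and its RUN=echo dry-run mode are ours. The real commands must be typed in the Kaconsole session on rennes-2, since removing the addresses cuts SSH access.

```shell
# Sketch of action #4 on rennes-2: turn the node into an Ethernet switch.
# Usage: setup_ovs_bridge BRIDGE IFACE...   (RUN=echo only prints the commands)
setup_ovs_bridge() {
    bridge=$1
    shift
    # rennes-2 now acts as a layer 2 switch and needs no IP address on
    # these interfaces, so remove the addresses obtained from DHCP.
    for iface in "$@"; do
        ${RUN:-} ip addr flush dev "$iface"
    done
    # Create the bridge, then attach each interface to it as a port.
    ${RUN:-} ovs-vsctl add-br "$bridge"
    for iface in "$@"; do
        ${RUN:-} ovs-vsctl add-port "$bridge" "$iface"
    done
    # List the ports of the bridge to check the result.
    ${RUN:-} ovs-vsctl list-ports "$bridge"
}
```

Calling `RUN=echo setup_ovs_bridge OVSbr eno1 eno2` first prints the six commands, which you can then type (or run through the function without RUN) in the console.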

Tests

Now that everything is configured:

- You should be able to ping rennes-1 at its new IP address from nancy-1.

- If you run traceroute, you should also notice that there is now only one hop between the 2 nodes.

| Note |
|---|
| Bridging could be achieved using Linux bridge driver and the |

Flow control

You can use OpenVSwitch to manage flows; for example, you can DROP all pings coming from a specific IP on a specific interface:

rennes:rennes-2:

```shell
ovs-ofctl add-flow OVSbr "in_port=1,icmp,nw_src=G_IP_rennes-1,nw_proto=1,actions=drop"
```

As can be checked with the "ovs-ofctl show" command, "in_port=1" refers to eno1 in our case.

Now, from rennes-1, try to ping nancy-1 and check that it doesn't work, but make sure you can still access it via SSH.

You can also DROP all packets from an IP with this command:

Now make sure you can no longer SSH from rennes-1 to nancy-1.

Additionally, the following command displays all your flow rules:

| Note |
|---|
| If you want to know more about the flow syntax, see this man page and look for the "Flow Syntax" section. |
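The flow operations of this section can be kept side by side in small shell functions. This is a sketch: the function names and the RUN=echo dry-run mode are ours, while `icmp`, `ip`, `in_port` and `nw_src` are standard ovs-ofctl match fields (`icmp` already implies `nw_proto=1`). Matching on `ip` drops all IPv4 traffic from the source, which is one way to implement the "drop all packets from an IP" step; ARP frames are not IPv4 and still pass.

```shell
# Flow-control helpers for the OpenVSwitch bridge on rennes-2.
# With RUN=echo, the ovs-ofctl commands are only printed.

# Drop ICMP packets from SRC_IP entering the bridge on port IN_PORT.
drop_icmp_from() {  # usage: drop_icmp_from BRIDGE IN_PORT SRC_IP
    ${RUN:-} ovs-ofctl add-flow "$1" "in_port=$2,icmp,nw_src=$3,actions=drop"
}

# Drop all IPv4 packets from SRC_IP entering the bridge on port IN_PORT.
drop_ip_from() {  # usage: drop_ip_from BRIDGE IN_PORT SRC_IP
    ${RUN:-} ovs-ofctl add-flow "$1" "in_port=$2,ip,nw_src=$3,actions=drop"
}

# List the flow rules currently installed on BRIDGE.
show_flows() {  # usage: show_flows BRIDGE
    ${RUN:-} ovs-ofctl dump-flows "$1"
}
```

The port number should be taken from `ovs-ofctl show OVSbr`, as explained above; for example, `drop_icmp_from OVSbr 1 G_IP_rennes-1` (with the placeholder replaced) adds the same kind of ICMP rule as the command shown earlier.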

Bonus: beta testing the TopoMaker tool!

The development of a new tool was recently started to automate most of the previous reconfiguration steps.

If you have finished the tutorial, please have a look at the TopoMaker page and beta test it!

Conclusions

At this stage, you are now ready to perform SDN (Software Defined Networking) and NFV (Network Function Virtualization) research on Grid'5000. You could:

- configure virtual network overlays using VXLAN on your OpenVSwitch instances

- set up an OpenFlow controller such as POX or NOX to control your OpenVSwitch switches

- set up network functions on reserved nodes (firewalls, load balancers)

Also note that Nancy has nodes with four 10Gbps network interfaces (grisou), which might be useful for more complex setups.