Booting and Using Virtual Machines on Grid'5000

Overview

The goal of this tutorial is to introduce the deployment of a large number of virtual machines on the Grid'5000 platform.

After a brief look at the Grid'5000 specifications and the requirements of this session, users are presented with a set of scripts and tools built on top of the Grid'5000 software stack to deploy and interact with a significant number of virtual machines. Our largest experiment and a few related tips are also described.

Once deployed, these instances can be used at the user's convenience to investigate particular concerns, such as the impact of migrating a large number of VMs simultaneously, or to study new proposals for VM image management.

Grid'5000 specifications

When booting KVM instances on the platform, we need physical hosts that support hardware virtualisation.

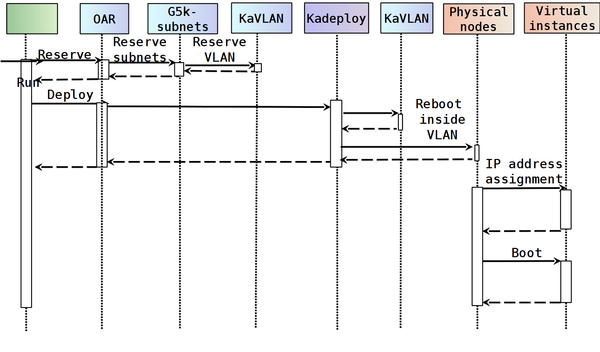

During the deployment, we are in charge of configuring the IP network used by the virtual machines through the network isolation capabilities of Grid'5000 and the subnet reservation system. This enables the use of an IP network ranging from a /22 to a /16 subnet and ensures communication between all the instances.
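To get a feel for what these prefix lengths mean, the following sketch computes how many IPv4 addresses each bound of the range contains, which gives an upper bound on the number of VMs that can be addressed. The helper name `subnet_size` is ours and is not part of the Grid'5000 tooling.

```shell
# Number of IPv4 addresses in a subnet with the given prefix length.
# (subnet_size is a hypothetical helper, not a Grid'5000 command.)
subnet_size() {
    echo $(( 1 << (32 - $1) ))
}

for prefix in 22 16; do
    echo "/$prefix -> $(subnet_size "$prefix") addresses"
done
# /22 -> 1024 addresses
# /16 -> 65536 addresses
```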

Deployment

Booking the resources

The first step is to retrieve the latest version of the scripts (the dynVM__TEMPLATE__ is located on the sophia site)

Move to the folder containing the code

Book the g5k resources according to the desired time and duration of your experiment.

From now on, the master site is the site from which you book the resources and execute the scripts.

This script returns an OAR request that includes the reservation of the nodes, a virtual network and a subnet.

Execute the OAR request returned by getmaxgridnodes.sh (don't forget to redirect it as shown in the example)

The master site is sophia in the following example.

Warning: make sure to use clusters/sites that offer hardware virtualization support (https://api.grid5000.fr/sid/ui/quick-start.html)

Deploying and configuring the physical machines

Move to the Flauncher directory

Get the list of nodes, then connect to the job

You are now connected to the grid OAR job

Display the list of nodes (optional)

Move to the Flauncher directory

Deploy the nodes

Deploy the VLAN and set up the hypervisor (Warning: use lowercase letters and repeat the master site as the first site)

We use a service node during the process to perform remote operations on the nodes of the infrastructure.

Retrieve the service node

service_node=$(sed -n '/sophia/p' ./log/machines-list.txt | head -n1)
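As an illustration of what this command does, the sketch below runs the same `sed | head` pipeline on a small sample file: it keeps only the lines that mention the master site and picks the first one. The sample hostnames and the temporary file are made up for the example; the real list lives in ./log/machines-list.txt.

```shell
# Hypothetical sample standing in for ./log/machines-list.txt
list=$(mktemp)
printf '%s\n' \
    'griffon-12.nancy.grid5000.fr' \
    'suno-3.sophia.grid5000.fr' \
    'suno-6.sophia.grid5000.fr' > "$list"

master_site=sophia

# Print only the lines matching the master site, then keep the first one.
service_node=$(sed -n "/$master_site/p" "$list" | head -n1)
echo "$service_node"
# suno-3.sophia.grid5000.fr

rm -f "$list"
```

Using a variable for the site name, as done here, avoids editing the pattern by hand when the master site changes.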

Connect to the service node

Usage

Retrieving the infrastructure information

The service node holds all the information about the deployed infrastructure.

This information can be accessed through various getter functions, depending on what you need.

Example : Retrieving the mapping between the reserved IP and the nodes

Please consult the README file for a complete description of the available getters.

Creation of the Virtual Machines

Create a single virtual machine on a host

It is possible to create a single virtual machine by using the following script

Creating a virtual machine requires an IP address (IP), an amount of RAM (MEMORY), the current site and node, and an arbitrary ID.

Create all the virtual machines from a service node

When the infrastructure requires a large number of virtual machines with a specific mapping, the desired infrastructure must be described in a configuration file to enable an automatic and remote deployment.

    #list of nodes
    suno-3.sophia.grid5000.fr 4 20 8
    suno-6.sophia.grid5000.fr 4 20 8
    suno-9.sophia.grid5000.fr 4 20 8
    #list of VMs
    vm00 1 1 1 2147483647
    vm01 1 1 1 2147483647
    vm02 1 1 1 2147483647
    vm03 1 1 1 2147483647
    vm04 1 1 1 2147483647
    vm05 1 1 1 2147483647
    #initial configuration
    suno-3.sophia.grid5000.fr vm00 vm03
    suno-6.sophia.grid5000.fr vm01 vm04
    suno-9.sophia.grid5000.fr vm02 vm05
    #end of configuration

In this particular configuration file, we declare 3 physical nodes and 6 virtual machines.

The last part of the configuration describes a simple mapping of 2 virtual machines on each node.

(The node names must be the physical hostnames of your infrastructure.)
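To check a configuration file before launching a deployment, it can help to list the host/VM pairs it declares. The following is a minimal sketch that reads only the "#initial configuration" section; the function name `vm_mapping` is hypothetical, and the numeric columns of the node and VM sections are ignored because their meaning is not described here.

```shell
# Write the example configuration to a temporary file.
config=$(mktemp)
trap 'rm -f "$config"' EXIT
cat > "$config" <<'EOF'
#list of nodes
suno-3.sophia.grid5000.fr 4 20 8
suno-6.sophia.grid5000.fr 4 20 8
suno-9.sophia.grid5000.fr 4 20 8
#list of VMs
vm00 1 1 1 2147483647
vm01 1 1 1 2147483647
vm02 1 1 1 2147483647
vm03 1 1 1 2147483647
vm04 1 1 1 2147483647
vm05 1 1 1 2147483647
#initial configuration
suno-3.sophia.grid5000.fr vm00 vm03
suno-6.sophia.grid5000.fr vm01 vm04
suno-9.sophia.grid5000.fr vm02 vm05
#end of configuration
EOF

# Print one "host vm" pair per line from the "#initial configuration" section.
# (vm_mapping is a hypothetical helper, not one of the tutorial's scripts.)
vm_mapping() {
    awk '
        /^#initial configuration/ { in_map = 1; next }  # section starts
        /^#/                      { in_map = 0; next }  # any other marker ends it
        in_map { for (i = 2; i <= NF; i++) print $1, $i }
    ' "$1"
}

vm_mapping "$config"
```

With the example file, this prints six pairs, two for each of the three hosts, starting with `suno-3.sophia.grid5000.fr vm00`.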

We can now create virtual machines on each host according to the specified configuration file, from the service node.

All the virtual instances are started at the same time, using a hierarchical structure among the physical nodes. The correlation between names and IPs is stored in a dedicated file propagated on each physical node. This allows us to identify and communicate with all the virtual machines.

At the end of the operation, the instances previously described are booted and available for experiments.

Interaction with the Machines

We need to be able to control, monitor and communicate with both the host OSes and the guest instances spread across the infrastructure at any time.

For that purpose, the following scripts rely on hierarchical communication structures so that communication with the physical and/or virtual instances scales to large deployments.

Execute remote commands

Execute a command on a list of nodes using a tree distribution

Copy files

Copy a file to a list of nodes using a tree distribution
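The idea behind a tree distribution is that each node that has received the command or file forwards it to a few others, so the work is not serialized on the service node. The sketch below lays a k-ary tree over a list of nodes and prints who forwards to whom. It only illustrates the principle: the function name `tree_edges`, the node names and the fixed-arity layout are assumptions, and the actual scripts may build their tree differently.

```shell
# Print "parent child" edges of a k-ary tree laid over a list of nodes.
# Node i (0-based) gets node (i - 1) / arity as its parent; node 0 is the root.
# (tree_edges is a hypothetical helper written for this illustration.)
tree_edges() {
    arity=$1
    shift
    printf '%s\n' "$@" | awk -v k="$arity" '
        { node[NR - 1] = $0 }
        END {
            for (i = 1; i < NR; i++)
                print node[int((i - 1) / k)], node[i]
        }'
}

tree_edges 2 node-1 node-2 node-3 node-4 node-5
# node-1 node-2
# node-1 node-3
# node-2 node-4
# node-2 node-5
```

With an arity of 2, the number of forwarding rounds grows with the logarithm of the number of nodes rather than linearly.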

In Practice : 10240 Virtual Machines on 512 Physical Hosts

Given that physical machines must support hardware virtualization to start KVM instances, the largest experiment conducted so far involved 10240 KVM instances on 512 nodes across 4 sites and 10 clusters. The whole setup takes less than 30 minutes: about 10 minutes for the deployment of the nodes, 5 minutes for the installation and configuration of the required packages on the physical hosts, and the rest for booting the virtual machines. This work opens the door to manipulating virtual machines throughout a distributed infrastructure in the same way that traditional operating systems handle processes on a local node.
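The figures above can be turned into two quick back-of-the-envelope numbers. This sketch assumes the VMs were spread evenly over the hosts, which the description suggests but does not state, and it treats the 30-minute total as an upper bound.

```shell
vms=10240
hosts=512
# Average number of VMs per physical host (assumes an even spread).
echo "VMs per host: $(( vms / hosts ))"
# VMs per host: 20

total_min=30    # upper bound on the whole setup
deploy_min=10   # deployment of the nodes
setup_min=5     # package installation and configuration
# Time left for booting the VMs, again as an upper bound.
echo "Boot time budget: under $(( total_min - deploy_min - setup_min )) minutes"
# Boot time budget: under 15 minutes
```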

Useful Tips

Booking grid resources

- Provide the request that retrieves the maximum number of nodes available on specific clusters during the defined time slot

Deployments

- Ensure a minimum amount of deployed nodes

To ensure that 95% (rounded down) of the reserved nodes are correctly deployed (3 attempts max), instead of running:

Run:

    NB_NODES=$(sort -u $OAR_NODE_FILE | wc -l)
    MIN_NODES=$(($NB_NODES * 95/100))
    /grid5000/code/bin/katapult3 --deploy-env squeeze-x64-prod --copy-ssh-key --min-deployed-nodes $MIN_NODES --max-deploy-runs 3
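The "rounded down" behaviour comes from shell integer arithmetic, which truncates the result of the division. The following sketch applies the same formula to a few node counts; `min_nodes` is a hypothetical helper used only to show the rounding.

```shell
# 95% of the reserved nodes, rounded down by integer division.
# (min_nodes is a hypothetical helper mirroring the MIN_NODES formula.)
min_nodes() {
    echo $(( $1 * 95 / 100 ))
}

for n in 10 64 512; do
    echo "$n reserved -> at least $(min_nodes "$n") deployed"
done
# 10 reserved -> at least 9 deployed
# 64 reserved -> at least 60 deployed
# 512 reserved -> at least 486 deployed
```

Multiplying before dividing matters here: dividing first would truncate early and return a smaller value.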

Communication

- About the Saturation of ARP tables

Tools

- Network isolation over Grid'5000 : KaVLAN

- Booking a range of IP addresses : Subnet Reservation System

- Deployment of nodes : Kadeploy3

- Execution of remote commands : TakTuk