Sophia:Network: Difference between revisions


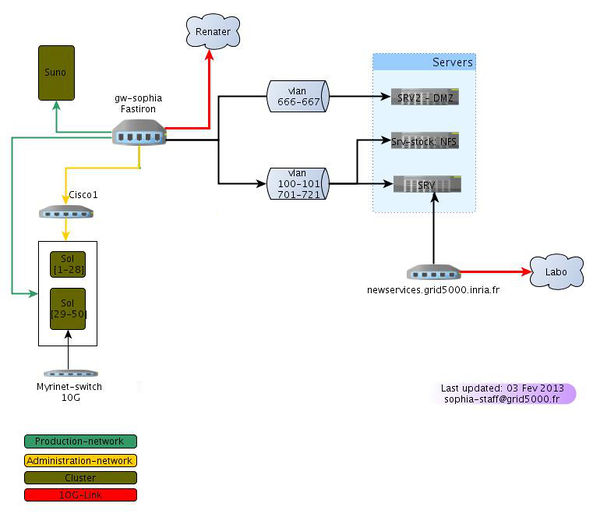


Revision as of 10:46, 30 September 2016

Network Topology

IP networks in use

You have to use a public network range to run an experiment between several Grid5000 sites.

Public Networks

- computing : 172.16.128.0/20

- virtual : 10.164.0.0/14

Local Networks

- admin or ipmi : 172.17.128.0/24
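As a quick check before a multi-site experiment, the sketch below tests whether an address falls in one of the ranges listed above. It uses only Python's standard `ipaddress` module. The function name `classify` and the dictionary labels are illustrative, and reading the admin range as a /24 is an assumption.

```python
import ipaddress

# Ranges copied from the lists above. The admin range is read as a /24,
# which is an assumption.
PUBLIC_NETWORKS = {
    "computing": ipaddress.ip_network("172.16.128.0/20"),
    "virtual": ipaddress.ip_network("10.164.0.0/14"),
}
LOCAL_NETWORKS = {
    "admin or ipmi": ipaddress.ip_network("172.17.128.0/24"),
}


def classify(address):
    """Return (scope, name) for a listed range, or None if no range matches."""
    ip = ipaddress.ip_address(address)
    for scope, networks in (("public", PUBLIC_NETWORKS), ("local", LOCAL_NETWORKS)):
        for name, network in networks.items():
            if ip in network:
                return scope, name
    return None


if __name__ == "__main__":
    for candidate in ("172.16.130.5", "10.165.1.1", "172.17.128.10"):
        print(candidate, classify(candidate))
```

Only addresses reported as `public` are suitable for traffic between several Grid5000 sites.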

Network

Gigabit Ethernet

Sol Cluster (Sun X2200 M2)

All the nodes are connected (in a non-blocking way) to the main Foundry FastIron Super X switch.

47 nodes (from sol-1 to sol-47) have a second Ethernet interface connected to 2 stacked Cisco 3750 switches, but these interfaces are not activated.

- IP addressing for eth1: sol-{1-47}-eth1, using the 172.16.128.0/24 network (172.16.128.154 can be used as a gateway)

The Cisco switches are connected to the main FastIron router through a single 1 Gbps link.

Suno Cluster (Dell R410)

All the nodes are connected (in a non-blocking way) to the main Foundry FastIron Super X switch.

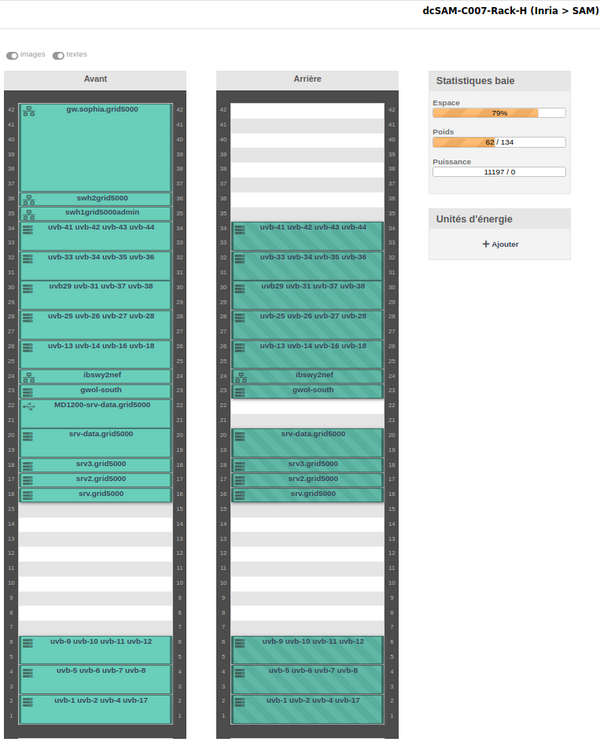

UVA and UVB Clusters

All the nodes are connected to two stacked Dell PowerConnect 6248 switches. The PowerConnect stack is connected to the Foundry FastIron Super X through a 10 Gbps fiber link.

Topology

The main switch is a Foundry FastIron Super X. It has 2 dual 10 Gbps modules, 4 modules with 24 gigabit ports each, and 12 gigabit ports on the management module (so 60 gigabit ports are available). 4 slots are currently free.

High Performance networks

Myri 10G

A subset of the sol cluster (sol-[29-50]) is connected to a 10G Myrinet switch.

Infiniband 40G on uva and uvb

The uva and uvb cluster nodes are all connected to 40G Infiniband switches. Since these two clusters are shared with the Nef production cluster at INRIA Sophia, Infiniband partitions are used to isolate the nodes from nef when they are available on grid5000. The partition dedicated to grid5000 is 0x8108. The ipoib interfaces on the nodes are therefore named ib0.8100 instead of ib0. To use the native openib driver of openmpi, you must set: btl_openib_pkey = 0x8108
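The partition key can be passed to Open MPI on the command line through `mpirun --mca`. The sketch below only builds the argument list. The helper name `mpirun_command`, the program `./my_app` and the hostfile `nodes.txt` are placeholders, not part of this site's setup.

```python
import shlex


def mpirun_command(program, pkey="0x8108", hostfile=None):
    """Build an mpirun argument list that sets btl_openib_pkey.

    The default pkey is the grid5000 partition quoted above; the program
    and hostfile are supplied by the caller (hypothetical values here).
    """
    command = ["mpirun", "--mca", "btl_openib_pkey", pkey]
    if hostfile:
        command += ["--hostfile", hostfile]
    command.append(program)
    return command


if __name__ == "__main__":
    # Print the command for inspection; run it with subprocess.run(...) if desired.
    print(shlex.join(mpirun_command("./my_app", hostfile="nodes.txt")))
```

Returning a list rather than a single string lets the result go straight to `subprocess.run` without shell quoting.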

Nodes

uva-1 to uva-13 and uvb-1 to uvb-44 have one QDR Infiniband card.

- Card Model : Mellanox Technologies MT26428 [ConnectX IB QDR, PCIe 2.0 5GT/s].

- Driver : mlx4_ib

- OAR property : ib_rate=40

- IP over IB addressing :

  - uva-[1..13]-ib0.sophia.grid5000.fr ( 172.18.131.[1..13] )

  - uvb-[1..44]-ib0.sophia.grid5000.fr ( 172.18.132.[1..44] )
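The IP over IB addressing scheme above can be expressed as a small lookup. This is a sketch that follows the two listed patterns only; the function name `ipoib_address` and the table `IPOIB_LAYOUT` are illustrative.

```python
import re

# cluster -> (third octet of 172.18.x.y, number of nodes), from the list above
IPOIB_LAYOUT = {"uva": (131, 13), "uvb": (132, 44)}


def ipoib_address(node):
    """Map a node name such as 'uvb-7' to its (IPoIB hostname, IPv4 address)."""
    match = re.fullmatch(r"(uva|uvb)-(\d+)", node)
    if match is None:
        raise ValueError(f"not a uva/uvb node name: {node!r}")
    cluster, index = match.group(1), int(match.group(2))
    third_octet, node_count = IPOIB_LAYOUT[cluster]
    if not 1 <= index <= node_count:
        raise ValueError(f"{cluster} nodes are numbered 1..{node_count}")
    hostname = f"{cluster}-{index}-ib0.sophia.grid5000.fr"
    return hostname, f"172.18.{third_octet}.{index}"


if __name__ == "__main__":
    print(ipoib_address("uva-3"))
    print(ipoib_address("uvb-44"))
```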

Switch

- three Mellanox IS50xx QDR Infiniband switches

- Topology available here : https://wiki.inria.fr/ClustersSophia/Network (uva and uvb are nef084-nef140 on the nef production cluster)

Interconnection

The Infiniband network is physically isolated from the Ethernet networks. Therefore, the Ethernet network emulated over Infiniband is isolated as well. There is no interconnection, at either the data link layer or the network layer.