Grenoble:Network

Revision as of 19:17, 3 February 2021

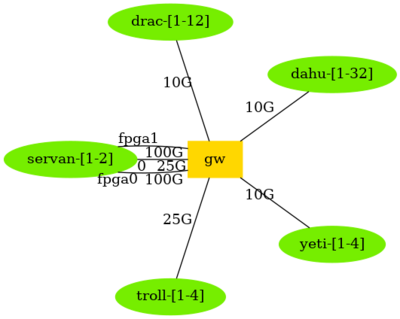

Overview of Ethernet network topology

Network device models

- gw: Dell S5296F-ON

- imag-1b-F1-admin-01: Aruba R9W97A 8100-40XT8XF4C switch

- imag-1b-F1-prod-01: Aruba JL719C 8360-48Y6C v2 Switch

- imag-1b-F2-admin-01: Aruba R9W97A 8100-40XT8XF4C switch

- imag-1b-F2-prod-01: Aruba JL719C 8360-48Y6C v2 Switch

- imag-1b-F3-admin-01: Aruba R9W97A 8100-40XT8XF4C switch

- imag-1b-F3-prod-01: Aruba JL719C 8360-48Y6C v2 Switch

- opa-grenoble: Intel Omni-Path Edge Switch 100 Series/H1048-OPF

- skinovis2-admin-01: Dell PowerConnect 6248

- skinovis2-prod-01: N9K-C93360YC-FX2

- sw-ib-mellanox: Mellanox IB switch

More details (including address ranges) are available from the Grid5000:Network page.

HPC Networks

InfiniBand 100G network

An InfiniBand 100G network interconnects the nodes of the drac cluster through a single switch.

- Switch: Mellanox SB7700 IB EDR / 100G

- Host adapter: Mellanox MT27700 [ConnectX-4] dual-port

- Each of the 12 drac nodes has two 100G connections to the switch
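
The figures in the list above imply a few simple totals. The following is a minimal sketch of that arithmetic only; the variable names are illustrative, and the bandwidth values are nominal link rates, not measured throughput.

```python
# Values taken from the list above.
DRAC_NODES = 12        # nodes in the drac cluster
PORTS_PER_NODE = 2     # dual-port ConnectX-4 adapter, both ports connected
LINK_GBPS = 100        # nominal EDR link rate

# Switch ports occupied by drac nodes.
switch_ports_used = DRAC_NODES * PORTS_PER_NODE

# Nominal bandwidth available to one node, and summed over all node links.
per_node_gbps = PORTS_PER_NODE * LINK_GBPS
aggregate_gbps = switch_ports_used * LINK_GBPS

print(f"switch ports used by drac: {switch_ports_used}")
print(f"nominal bandwidth per node: {per_node_gbps} Gb/s")
print(f"nominal aggregate over all links: {aggregate_gbps} Gb/s")
```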

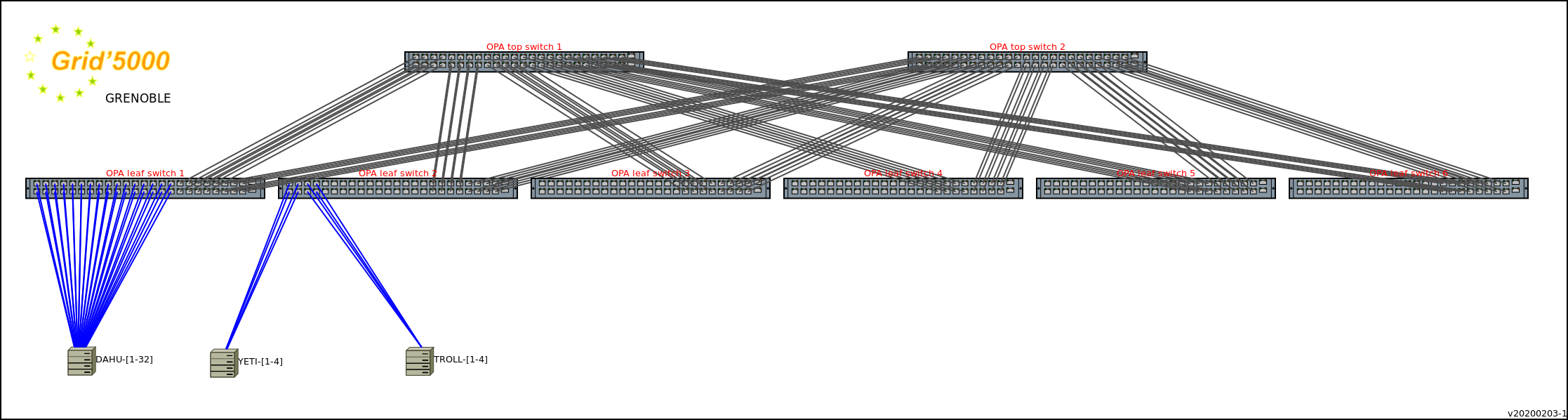

Omni-Path 100G network

In addition to the Ethernet network, the nodes of the dahu, yeti and troll clusters are connected to Omni-Path switches (Intel Omni-Path, 100 Gbps).

This Omni-Path network interconnects both the Grid'5000 Grenoble nodes and the nodes of the HPC Center of Université Grenoble Alpes (Gricad mésocentre).

The topology used is a fat tree with a 2:1 blocking factor:

- 2 top switches

- 6 leaves: switches with 32 downlinks to nodes and 8 uplinks to each of the two top switches (48 ports total)

All 32 dahu nodes are connected to the same leaf. The 4 yeti nodes are connected to another leaf. The leaf assignment of the troll nodes is to be confirmed.

Other ports are used by nodes of the HPC center of UGA.
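
The 2:1 blocking factor follows directly from the port counts given above: each leaf has 32 downlinks for 16 uplinks. The following is a minimal sketch of that arithmetic; the `LeafSwitch` class and its field names are hypothetical helpers introduced for illustration, not part of any Omni-Path tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LeafSwitch:
    """Port layout of one leaf switch (hypothetical helper for illustration)."""
    downlinks: int        # ports facing compute nodes
    uplinks_per_top: int  # uplinks to each top switch
    top_switches: int     # number of top switches

    @property
    def uplinks(self) -> int:
        return self.uplinks_per_top * self.top_switches

    @property
    def ports(self) -> int:
        return self.downlinks + self.uplinks

    @property
    def blocking_factor(self) -> float:
        # Ratio of node-facing bandwidth to uplink bandwidth (all links are 100G).
        return self.downlinks / self.uplinks


LEAVES = 6
leaf = LeafSwitch(downlinks=32, uplinks_per_top=8, top_switches=2)

print(f"ports per leaf: {leaf.ports}")
print(f"blocking factor: {leaf.blocking_factor:.0f}:1")
print(f"node-facing ports in the fabric: {LEAVES * leaf.downlinks}")
print(f"ports used on each top switch: {LEAVES * leaf.uplinks_per_top}")
```

With these numbers, the 32 dahu nodes fill all the downlinks of one leaf, so traffic between dahu nodes stays on that leaf and does not cross the top switches.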

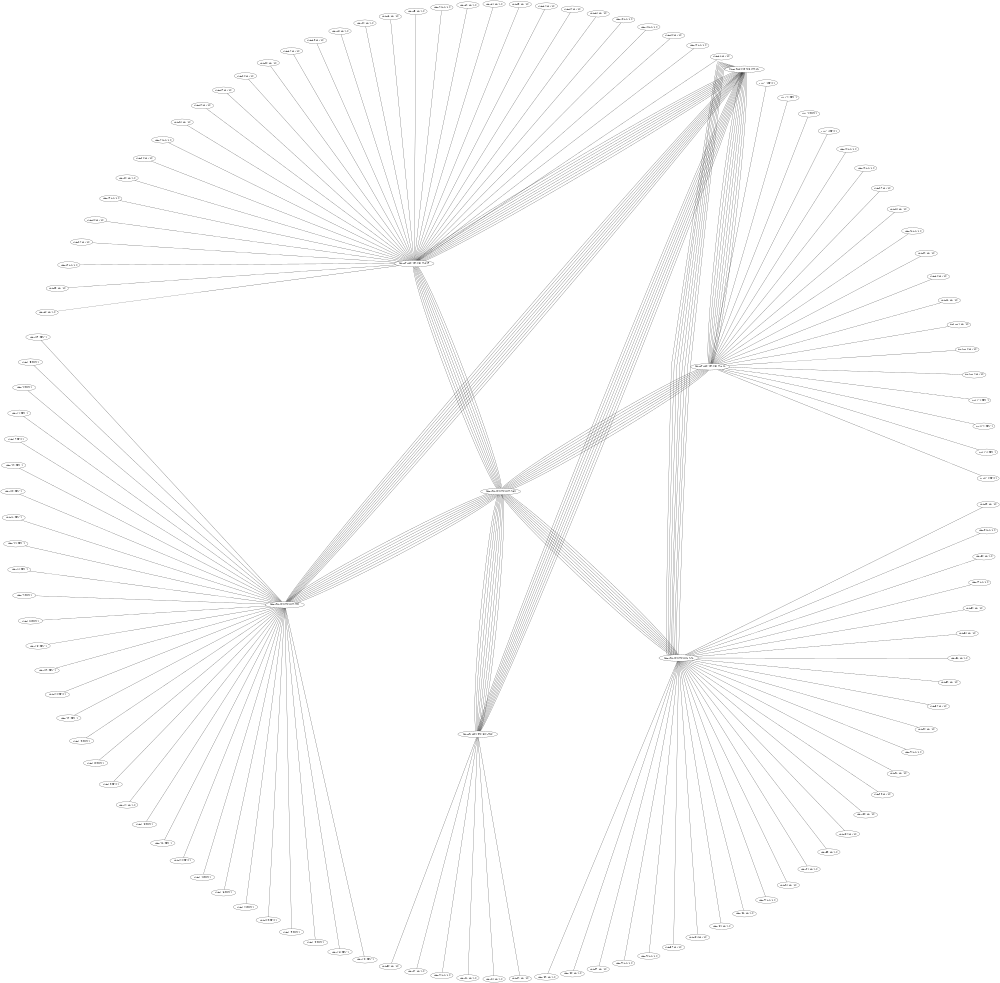

A generated topology (using the output of opareports -o topology):

IP Range

- Computing: 172.16.16.0/20

- Omni-Path: 172.18.16.0/20

- Virtual: 10.132.0.0/14
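
To see what these CIDR ranges cover, the following sketch uses Python's standard `ipaddress` module to print the first and last address of each range and to tell which range an address belongs to. The `classify` helper and the sample addresses are hypothetical, chosen only for illustration; they do not refer to actual hosts.

```python
import ipaddress
from typing import Optional

# Ranges as listed above.
RANGES = {
    "Computing": ipaddress.ip_network("172.16.16.0/20"),
    "Omni-Path": ipaddress.ip_network("172.18.16.0/20"),
    "Virtual": ipaddress.ip_network("10.132.0.0/14"),
}


def classify(address: str) -> Optional[str]:
    """Return the name of the range containing `address`, or None."""
    ip = ipaddress.ip_address(address)
    for name, network in RANGES.items():
        if ip in network:
            return name
    return None


for name, network in RANGES.items():
    # network[0] and network[-1] are the first and last addresses of the block.
    print(f"{name}: {network[0]} - {network[-1]} "
          f"({network.num_addresses} addresses)")

# Sample addresses (hypothetical, for illustration only).
for sample in ("172.16.20.1", "172.18.20.1", "10.134.0.1", "192.168.0.1"):
    print(sample, "->", classify(sample))
```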